One study used a manually set distortion weight map to control bit-rate allocation, assigning larger distortion weights to the region of interest (ROI) in the image and thereby improving the quality of that region.
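A minimal sketch of how a hand-set weight map can make errors in a region of interest more expensive. It assumes grayscale images stored as NumPy arrays and a weighted mean squared error as the distortion; the function name `roi_weighted_mse`, the normalization by total weight, and the example ROI are illustrative choices, not the cited study's exact formulation.

```python
import numpy as np

def roi_weighted_mse(original, reconstructed, weight_map):
    """Weighted mean squared error for grayscale images of shape (H, W).

    weight_map (H, W) is set by hand (hypothetical here): larger values
    inside the region of interest (ROI) make errors there more costly.
    """
    original = np.asarray(original, dtype=np.float64)
    reconstructed = np.asarray(reconstructed, dtype=np.float64)
    weight_map = np.asarray(weight_map, dtype=np.float64)
    squared_error = (original - reconstructed) ** 2
    # Normalizing by the total weight keeps the value on the same scale as MSE.
    return float(np.sum(weight_map * squared_error) / np.sum(weight_map))

image = np.zeros((4, 4))
weights = np.ones((4, 4))
weights[:2, :2] = 4.0                  # hypothetical ROI: top-left 2 x 2 block

error_in_roi = image.copy()
error_in_roi[0, 0] = 2.0               # same error magnitude...
error_outside = image.copy()
error_outside[3, 3] = 2.0              # ...placed outside the ROI

print(roi_weighted_mse(image, error_in_roi, weights) /
      roi_weighted_mse(image, error_outside, weights))  # 4.0
```

With a weight of 4 inside the ROI and 1 elsewhere, the same pixel error costs four times as much inside the ROI, so an encoder that minimizes rate plus this distortion has an incentive to spend more bits there.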

Another study used importance maps to guide image compression trained with generative adversarial network (GAN) loss functions, improving subjective image quality. A further study proposed a parallelizable checkerboard context model that changes the decoding order, speeding up decoding without compromising compression performance.
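The checkerboard idea can be sketched as follows: latent positions are split into two interleaved groups, "anchors" decoded first in parallel without spatial context, and "non-anchors" decoded second in parallel using the already decoded anchors as context. This sketch only builds the masks and checks that dependency structure; treating positions with even `row + col` as anchors is an assumption, and the helper names are hypothetical.

```python
import numpy as np

def checkerboard_masks(height, width):
    """Boolean masks for anchor and non-anchor positions of an H x W latent grid.

    Assumption: positions with even (row + col) are anchors.
    """
    rows, cols = np.indices((height, width))
    anchor = (rows + cols) % 2 == 0
    return anchor, ~anchor

def non_anchor_context_ready(height, width):
    """True if every non-anchor's 4-neighbours are anchors, i.e. its spatial
    context is fully decoded after the first (anchor) pass."""
    anchor, non_anchor = checkerboard_masks(height, width)
    for r, c in zip(*np.nonzero(non_anchor)):
        for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
            rr, cc = r + dr, c + dc
            if 0 <= rr < height and 0 <= cc < width and not anchor[rr, cc]:
                return False
    return True

anchor, non_anchor = checkerboard_masks(4, 4)
print(anchor.astype(int))
# Pass 1 decodes all anchors in parallel (hyperprior information only);
# pass 2 decodes all non-anchors in parallel, using the anchors as context.
print(non_anchor_context_ready(4, 4))  # True
```

Because every non-anchor's four neighbours are anchors, decoding needs two parallel passes instead of one sequential step per spatial position, as a raster-scan autoregressive context model would require.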

The entropy model with a hyperprior was first proposed to capture hidden information in the latent representations; this side information helps generate the entropy model parameters and reduces the mismatch between the entropy model and the actual marginal distribution of the latents. A later study introduced an autoregressive module, so that the autoregressive and hyperprior modules complement each other and improve entropy estimation. Other work used a nonlocal model, or a CNN-based wavelet transform, to reduce redundancy among pixels.

Classical learning-based image compression algorithms use a variational autoencoder (VAE) structure, and significant progress has been made on the technical components of this architecture, including generalized divisive normalization (GDN) modules in the nonlinear transforms, which have proven effective for probabilistic modeling and image compression. In this architecture, an encoder built from stacked convolutional neural network (CNN) layers converts the image into compressible latent representations. Designing the quantization module is a further important task, because quantization has a significant impact on compression performance. The quantized latents are then entropy coded, losslessly exploiting their statistical redundancy to produce a bitstream, and a jointly optimized decoder reconstructs the image from the latents.

Accurate entropy estimation is one of the pivotal factors in compression performance: the more closely the entropy model fits the true distribution of the data, the fewer bits entropy coding needs. In contrast to traditional methods, learned methods replace the linear transform with a nonlinear neural network, so their performance also depends on how the network is constructed to produce more compact latent features.
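For reference, GDN divides each channel by a learned combination of the squared activations of all channels at the same spatial position: y_i = x_i / sqrt(beta_i + sum_j gamma_ij * x_j^2). The NumPy sketch below evaluates that formula with fixed, illustrative `beta` and `gamma`; in a trained codec these are learned parameters. The decoder-side `inverse_gdn` (IGDN) multiplies by the same norm instead of dividing, which is the usual approximation rather than an exact inverse.

```python
import numpy as np

def _gdn_norm(x, beta, gamma):
    """sqrt(beta_i + sum_j gamma_ij * x_j**2) at every spatial position."""
    beta = np.asarray(beta, dtype=np.float64)
    gamma = np.asarray(gamma, dtype=np.float64)
    # Mix the squared channels with gamma: (C, C) x (C, H, W) -> (C, H, W).
    mixed = np.einsum("ij,jhw->ihw", gamma, x ** 2)
    return np.sqrt(beta[:, None, None] + mixed)

def gdn(x, beta, gamma):
    """GDN over channels for an activation map x of shape (C, H, W)."""
    x = np.asarray(x, dtype=np.float64)
    return x / _gdn_norm(x, beta, gamma)

def inverse_gdn(y, beta, gamma):
    """Decoder-side IGDN: multiplies by the same norm (approximate inverse)."""
    y = np.asarray(y, dtype=np.float64)
    return y * _gdn_norm(y, beta, gamma)

rng = np.random.default_rng(0)
x = rng.normal(size=(3, 4, 4))   # 3 channels, 4 x 4 spatial positions
beta = np.ones(3)                # illustrative values; normally learned
gamma = 0.1 * np.ones((3, 3))
y = gdn(x, beta, gamma)
print(y.shape)  # (3, 4, 4)
```

With `gamma` set to zero and `beta` to one, GDN reduces to the identity, which is a convenient sanity check.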
Advances in deep learning have opened up new directions for image compression. Traditional compression algorithms, by contrast, cannot learn from data.

For example, JPEG applies a fixed, hand-designed transform, the discrete cosine transform (DCT), to 8 × 8 pixel blocks to reduce redundancy among pixels.
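The DCT step can be illustrated on a single 8 × 8 block. The sketch builds the orthonormal DCT-II basis in NumPy and shows that a smooth block concentrates almost all of its energy in a few low-frequency coefficients, which is what the subsequent quantization exploits. It covers only the transform (plus JPEG's level shift by 128 for 8-bit samples); JPEG's quantization tables, zigzag scan, and entropy coding are omitted, and the test block is a made-up gradient.

```python
import numpy as np

def dct_matrix(n=8):
    """Orthonormal DCT-II matrix; row k holds the k-th cosine basis vector."""
    k = np.arange(n)[:, None]
    i = np.arange(n)[None, :]
    m = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * i + 1) * k / (2 * n))
    m[0, :] /= np.sqrt(2.0)      # scale the DC row so the matrix is orthonormal
    return m

def dct2(block):
    """2-D DCT of a square block: transform columns, then rows."""
    d = dct_matrix(block.shape[0])
    return d @ block @ d.T

def idct2(coeffs):
    """Inverse 2-D DCT (the transpose, because the matrix is orthonormal)."""
    d = dct_matrix(coeffs.shape[0])
    return d.T @ coeffs @ d

# A smooth 8 x 8 gradient (values 100..156), level-shifted by 128 as in JPEG.
block = 4.0 * np.add.outer(np.arange(8), np.arange(8)) + 100.0
coeffs = dct2(block - 128.0)
energy = coeffs ** 2
low_freq_share = energy[:2, :2].sum() / energy.sum()
print(f"{low_freq_share:.3f}")   # 0.988
```

The transform itself is lossless (`idct2(dct2(b))` recovers `b`); compression comes from quantizing the many small high-frequency coefficients coarsely or to zero.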

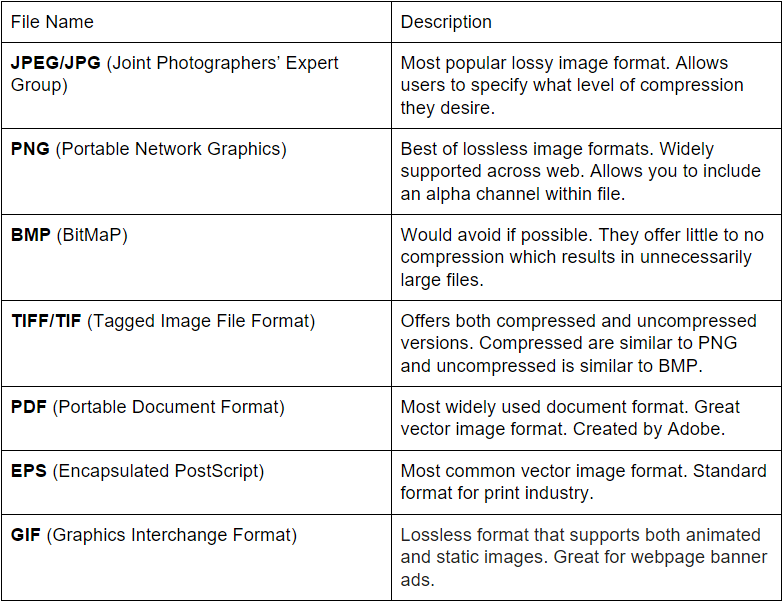

An overview of still image compression standards

Both inaccurate entropy estimation and information redundancy in the latent representations reduce compression efficiency. To address these issues, this study proposes an image compression method based on a hybrid domain attention mechanism and postprocessing enhancement. Hybrid domain attention modules are embedded as nonlinear transforms in both the main encoder-decoder network and the hyperprior network to construct more compact latent features and hyperpriors, and the latent features are then modeled with parametric Gaussian-scale mixture models to obtain more precise entropy estimates. In addition, an inverse quantization module is added to reduce the errors introduced by quantization, and on the decoding side a postprocessing enhancement module further improves compression performance. Experimental results show that the peak signal-to-noise ratio (PSNR) and multiscale structural similarity (MS-SSIM) of the proposed method are higher than those of traditional compression methods and advanced neural network-based methods.

In the age of information technology, images have become important carriers of information, and massive amounts of image data place enormous pressure on transmission and storage. For example, an uncompressed RGB image with a resolution of 512 × 768 has a theoretical storage size of about 1.125 MB (512 × 768 pixels × 3 bytes = 1,179,648 bytes), while after compression it may occupy only one-sixtieth of that size or even less. Image compression is therefore crucial in computer vision, and the development of information technology places ever higher demands on compression efficiency. Traditional image compression methods achieve good performance through carefully hand-designed features and complex processing.
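The storage figures for the 512 × 768 example and the PSNR metric can be checked with a few lines of Python. The sketch assumes three channels at 8 bits each and uses the standard definition PSNR = 10 · log10(MAX² / MSE) with MAX = 255; MS-SSIM is more involved and is not shown. The function names are illustrative.

```python
import math
import numpy as np

def raw_size_bytes(height, width, channels=3, bits_per_channel=8):
    """Uncompressed size of an image in bytes."""
    return height * width * channels * bits_per_channel // 8

def bits_per_pixel(size_bytes, height, width):
    """Average number of bits spent per pixel."""
    return size_bytes * 8 / (height * width)

def psnr(original, reconstructed, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two same-shaped images."""
    original = np.asarray(original, dtype=np.float64)
    reconstructed = np.asarray(reconstructed, dtype=np.float64)
    mse = np.mean((original - reconstructed) ** 2)
    if mse == 0:
        return math.inf          # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)

raw = raw_size_bytes(512, 768)                         # 1,179,648 bytes
print(raw / 2 ** 20)                                   # 1.125
compressed = raw / 60                                  # the "one-sixtieth" case
print(f"{bits_per_pixel(compressed, 512, 768):.2f}")   # 0.40 (vs. 24 uncompressed)
```

The 1.125 figure is in binary megabytes (2^20 bytes). At one-sixtieth of the raw size, the codec spends about 0.4 bits per pixel instead of 24.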

Deep learning-based image compression methods have achieved significant results in recent years; their two key components are the entropy model for the latent representations and the encoder-decoder network.
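To make the role of the entropy model concrete, the sketch below estimates the bit cost of rounded latents under a discretized Gaussian. In a hyperprior model, the mean and scale of each element would be predicted by the hyperprior network; here they are simply passed in, and a single Gaussian stands in for the Gaussian-scale mixture mentioned above. Both are simplifying assumptions, and the helper names are hypothetical.

```python
import math

def gaussian_cdf(x, mean, scale):
    """CDF of the normal distribution N(mean, scale**2)."""
    return 0.5 * (1.0 + math.erf((x - mean) / (scale * math.sqrt(2.0))))

def symbol_bits(y_hat, mean, scale, min_prob=1e-9):
    """Bits to code integer y_hat: -log2 P(y_hat - 0.5 < Y <= y_hat + 0.5)."""
    p = gaussian_cdf(y_hat + 0.5, mean, scale) - gaussian_cdf(y_hat - 0.5, mean, scale)
    return -math.log2(max(p, min_prob))  # floor avoids log2(0)

def estimated_rate_bits(latents, means, scales):
    """Total estimated bits for a sequence of latents quantized by rounding."""
    return sum(
        symbol_bits(round(y), mu, sigma)
        for y, mu, sigma in zip(latents, means, scales)
    )

latents = [0.2, -1.3, 2.7, 0.0]
scales = [0.5, 0.5, 0.5, 0.5]
good = estimated_rate_bits(latents, [0.0, -1.0, 3.0, 0.0], scales)  # well-fitted means
poor = estimated_rate_bits(latents, [0.0, 0.0, 0.0, 0.0], scales)   # mismatched means
print(good < poor)  # True: a better-fitting entropy model needs fewer bits
```

During training, learned codecs typically minimize a rate-distortion objective of the form R + λD, where R is an estimate like this one (usually computed with additive uniform noise in place of rounding so that it is differentiable) and D is a distortion such as MSE.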